Pacemaker Explained¶

Configuring Pacemaker Clusters

Abstract¶

This document definitively explains Pacemaker’s features and capabilities, particularly the XML syntax used in Pacemaker’s Cluster Information Base (CIB).

Table of Contents¶

1. Introduction¶

1.1. The Scope of this Document¶

This document is intended to be an exhaustive reference for configuring Pacemaker. To achieve this, it focuses on the XML syntax used to configure the CIB.

For those that are allergic to XML, multiple higher-level front-ends (both command-line and GUI) are available. These tools will not be covered in this document, though the concepts explained here should make the functionality of these tools more easily understood.

Users may be interested in other parts of the Pacemaker documentation set, such as Clusters from Scratch, a step-by-step guide to setting up an example cluster, and Pacemaker Administration, a guide to maintaining a cluster.

1.2. What Is Pacemaker?¶

Pacemaker is a high-availability cluster resource manager – software that runs on a set of hosts (a cluster of nodes) in order to preserve integrity and minimize downtime of desired services (resources). [1] It is maintained by the ClusterLabs community.

Pacemaker’s key features include:

- Detection of and recovery from node- and service-level failures

- Ability to ensure data integrity by fencing faulty nodes

- Support for one or more nodes per cluster

- Support for multiple resource interface standards (anything that can be scripted can be clustered)

- Support (but no requirement) for shared storage

- Support for practically any redundancy configuration (active/passive, N+1, etc.)

- Automatically replicated configuration that can be updated from any node

- Ability to specify cluster-wide relationships between services, such as ordering, colocation, and anti-colocation

- Support for advanced service types, such as clones (services that need to be active on multiple nodes), promotable clones (clones that can run in one of two roles), and containerized services

- Unified, scriptable cluster management tools

Note

Fencing

Fencing, also known as STONITH (an acronym for Shoot The Other Node In The Head), is the ability to ensure that it is not possible for a node to be running a service. This is accomplished via fence devices such as intelligent power switches that cut power to the target, or intelligent network switches that cut the target’s access to the local network.

Pacemaker represents fence devices as a special class of resource.

A cluster cannot safely recover from certain failure conditions, such as an unresponsive node, without fencing.

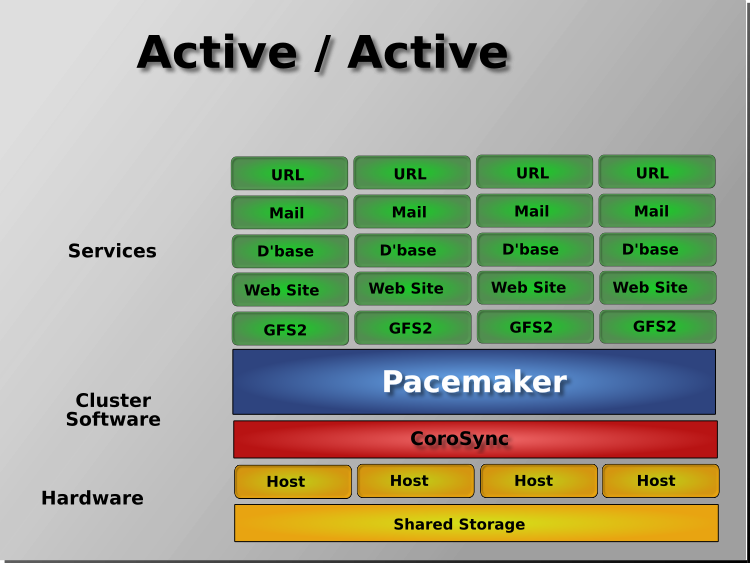

1.2.1. Cluster Architecture¶

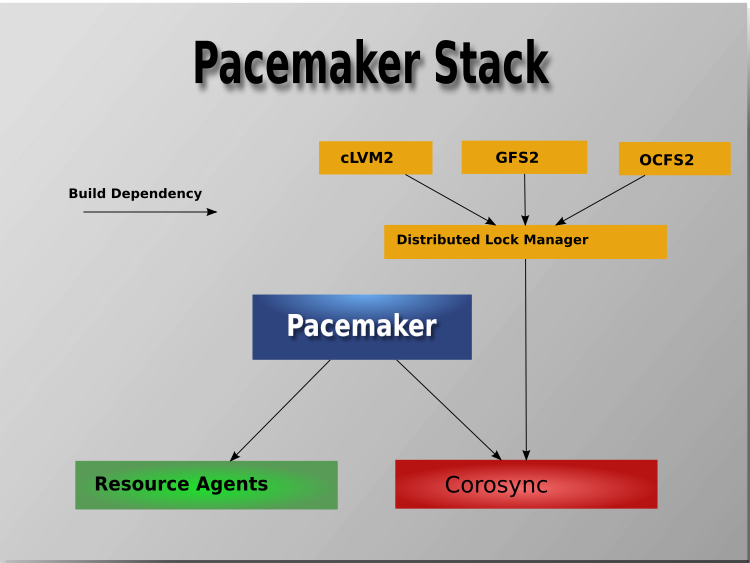

At a high level, a cluster can be viewed as having these parts (which together are often referred to as the cluster stack):

- Resources: These are the reason for the cluster’s being – the services that need to be kept highly available.

- Resource agents: These are scripts or operating system components that start, stop, and monitor resources, given a set of resource parameters. These provide a uniform interface between Pacemaker and the managed services.

- Fence agents: These are scripts that execute node fencing actions, given a target and fence device parameters.

- Cluster membership layer: This component provides reliable messaging, membership, and quorum information about the cluster. Currently, Pacemaker supports Corosync as this layer.

- Cluster resource manager: Pacemaker provides the brain that processes and reacts to events that occur in the cluster. These events may include nodes joining or leaving the cluster; resource events caused by failures, maintenance, or scheduled activities; and other administrative actions. To achieve the desired availability, Pacemaker may start and stop resources and fence nodes.

- Cluster tools: These provide an interface for users to interact with the cluster. Various command-line and graphical (GUI) interfaces are available.

Most managed services are not, themselves, cluster-aware. However, many popular open-source cluster filesystems make use of a common Distributed Lock Manager (DLM), which makes direct use of Corosync for its messaging and membership capabilities and Pacemaker for the ability to fence nodes.

1.2.2. Pacemaker Architecture¶

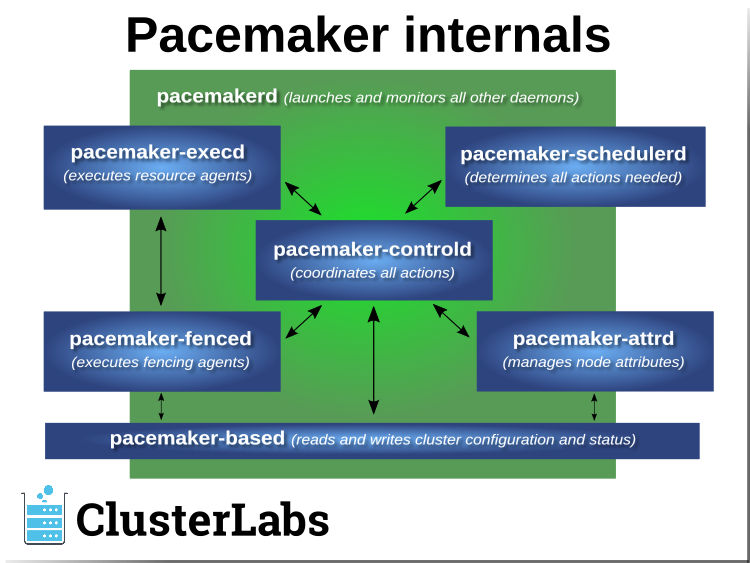

Pacemaker itself is composed of multiple daemons that work together:

pacemakerdpacemaker-attrdpacemaker-basedpacemaker-controldpacemaker-execdpacemaker-fencedpacemaker-schedulerd

Pacemaker’s main process (pacemakerd) spawns all the other daemons, and

respawns them if they unexpectedly exit.

The Cluster Information Base (CIB) is an

XML representation of the cluster’s

configuration and the state of all nodes and resources. The CIB manager

(pacemaker-based) keeps the CIB synchronized across the cluster, and

handles requests to modify it.

The attribute manager (pacemaker-attrd) maintains a database of

attributes for all nodes, keeps it synchronized across the cluster, and handles

requests to modify them. These attributes are usually recorded in the CIB.

Given a snapshot of the CIB as input, the scheduler

(pacemaker-schedulerd) determines what actions are necessary to achieve the

desired state of the cluster.

The local executor (pacemaker-execd) handles requests to execute

resource agents on the local cluster node, and returns the result.

The fencer (pacemaker-fenced) handles requests to fence nodes. Given a

target node, the fencer decides which cluster node(s) should execute which

fencing device(s), and calls the necessary fencing agents (either directly, or

via requests to the fencer peers on other nodes), and returns the result.

The controller (pacemaker-controld) is Pacemaker’s coordinator,

maintaining a consistent view of the cluster membership and orchestrating all

the other components.

Pacemaker centralizes cluster decision-making by electing one of the controller instances as the Designated Controller (DC). Should the elected DC process (or the node it is on) fail, a new one is quickly established. The DC responds to cluster events by taking a current snapshot of the CIB, feeding it to the scheduler, then asking the executors (either directly on the local node, or via requests to controller peers on other nodes) and the fencer to execute any necessary actions.

Note

Old daemon names

The Pacemaker daemons were renamed in version 2.0. You may still find references to the old names, especially in documentation targeted to version 1.1.

| Old name | New name |

|---|---|

attrd |

pacemaker-attrd |

cib |

pacemaker-based |

crmd |

pacemaker-controld |

lrmd |

pacemaker-execd |

stonithd |

pacemaker-fenced |

pacemaker_remoted |

pacemaker-remoted |

1.2.3. Node Redundancy Designs¶

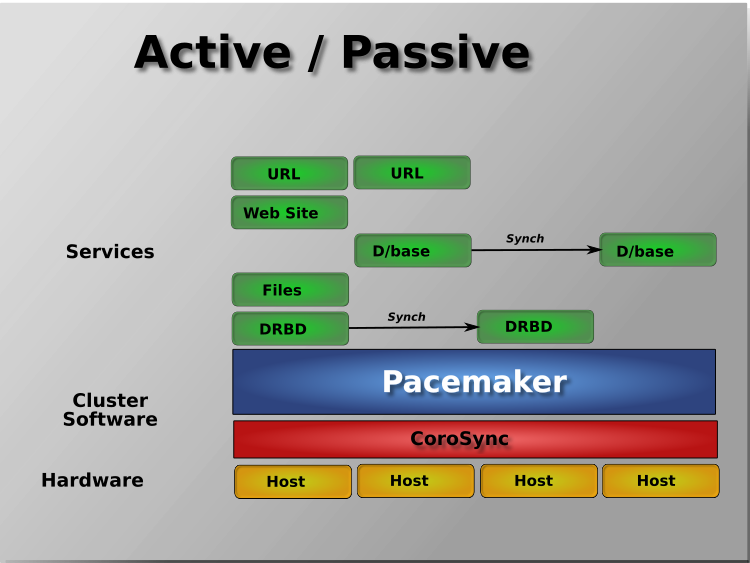

Pacemaker supports practically any node redundancy configuration including Active/Active, Active/Passive, N+1, N+M, N-to-1, and N-to-N.

Active/passive clusters with two (or more) nodes using Pacemaker and DRBD are a cost-effective high-availability solution for many situations. One of the nodes provides the desired services, and if it fails, the other node takes over.

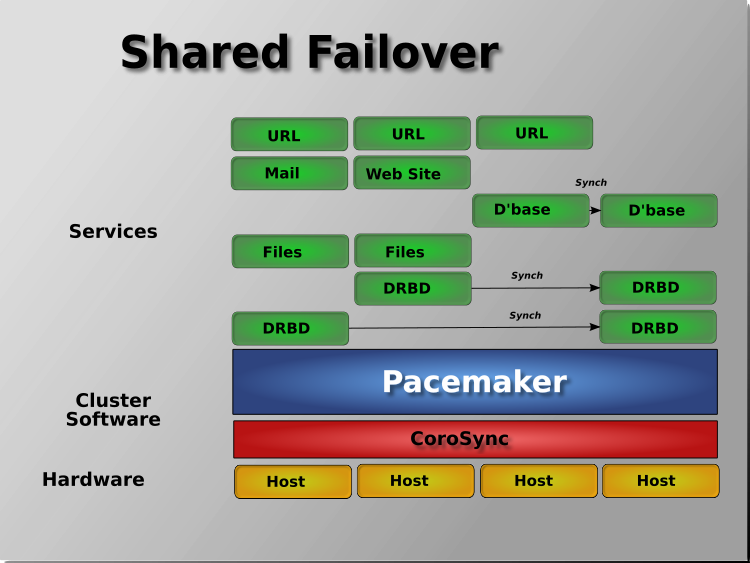

Pacemaker also supports multiple nodes in a shared-failover design, reducing hardware costs by allowing several active/passive clusters to be combined and share a common backup node.

When shared storage is available, every node can potentially be used for failover. Pacemaker can even run multiple copies of services to spread out the workload. This is sometimes called N-to-N redundancy.

Footnotes

| [1] | Cluster is sometimes used in other contexts to refer to hosts grouped together for other purposes, such as high-performance computing (HPC), but Pacemaker is not intended for those purposes. |

2. Cluster-Wide Configuration¶

2.1. Configuration Layout¶

The cluster is defined by the Cluster Information Base (CIB), which uses XML notation. The simplest CIB, an empty one, looks like this:

An empty configuration

<cib crm_feature_set="3.6.0" validate-with="pacemaker-3.5" epoch="1" num_updates="0" admin_epoch="0">

<configuration>

<crm_config/>

<nodes/>

<resources/>

<constraints/>

</configuration>

<status/>

</cib>

The empty configuration above contains the major sections that make up a CIB:

cib: The entire CIB is enclosed with acibelement. Certain fundamental settings are defined as attributes of this element.configuration: This section – the primary focus of this document – contains traditional configuration information such as what resources the cluster serves and the relationships among them.crm_config: cluster-wide configuration optionsnodes: the machines that host the clusterresources: the services run by the clusterconstraints: indications of how resources should be placed

status: This section contains the history of each resource on each node. Based on this data, the cluster can construct the complete current state of the cluster. The authoritative source for this section is the local executor (pacemaker-execd process) on each cluster node, and the cluster will occasionally repopulate the entire section. For this reason, it is never written to disk, and administrators are advised against modifying it in any way.

In this document, configuration settings will be described as properties or options based on how they are defined in the CIB:

- Properties are XML attributes of an XML element.

- Options are name-value pairs expressed as

nvpairchild elements of an XML element.

Normally, you will use command-line tools that abstract the XML, so the distinction will be unimportant; both properties and options are cluster settings you can tweak.

2.2. CIB Properties¶

Certain settings are defined by CIB properties (that is, attributes of the

cib tag) rather than with the rest of the cluster configuration in the

configuration section.

The reason is simply a matter of parsing. These options are used by the configuration database which is, by design, mostly ignorant of the content it holds. So the decision was made to place them in an easy-to-find location.

| Attribute | Description |

|---|---|

| admin_epoch | When a node joins the cluster, the cluster performs a

check to see which node has the best configuration. It

asks the node with the highest ( Warning: Never set this value to zero. In such cases, the cluster cannot tell the difference between your configuration and the “empty” one used when nothing is found on disk. |

| epoch | The cluster increments this every time the configuration is updated (usually by the administrator). |

| num_updates | The cluster increments this every time the configuration or status is updated (usually by the cluster) and resets it to 0 when epoch changes. |

| validate-with | Determines the type of XML validation that will be done

on the configuration. If set to |

| cib-last-written | Indicates when the configuration was last written to disk. Maintained by the cluster; for informational purposes only. |

| have-quorum | Indicates if the cluster has quorum. If false, this may

mean that the cluster cannot start resources or fence

other nodes (see |

| dc-uuid | Indicates which cluster node is the current leader. Used by the cluster when placing resources and determining the order of some events. Maintained by the cluster. |

2.3. Cluster Options¶

Cluster options, as you might expect, control how the cluster behaves when confronted with various situations.

They are grouped into sets within the crm_config section. In advanced

configurations, there may be more than one set. (This will be described later

in the chapter on Rules where we will show how to have the cluster use

different sets of options during working hours than during weekends.) For now,

we will describe the simple case where each option is present at most once.

You can obtain an up-to-date list of cluster options, including their default

values, by running the man pacemaker-schedulerd and

man pacemaker-controld commands.

| Option | Default | Description |

|---|---|---|

| cluster-name | An (optional) name for the cluster as a whole.

This is mostly for users’ convenience for use

as desired in administration, but this can be

used in the Pacemaker configuration in

Rules (as the |

|

| dc-version | Version of Pacemaker on the cluster’s DC. Determined automatically by the cluster. Often includes the hash which identifies the exact Git changeset it was built from. Used for diagnostic purposes. |

|

| cluster-infrastructure | The messaging stack on which Pacemaker is currently running. Determined automatically by the cluster. Used for informational and diagnostic purposes. |

|

| no-quorum-policy | stop | What to do when the cluster does not have quorum. Allowed values:

|

| batch-limit | 0 | The maximum number of actions that the cluster may execute in parallel across all nodes. The “correct” value will depend on the speed and load of your network and cluster nodes. If zero, the cluster will impose a dynamically calculated limit only when any node has high load. If -1, the cluster will not impose any limit. |

| migration-limit | -1 | The number of live migration actions that the cluster is allowed to execute in parallel on a node. A value of -1 means unlimited. |

| symmetric-cluster | true | Whether resources can run on any node by default (if false, a resource is allowed to run on a node only if a location constraint enables it) |

| stop-all-resources | false | Whether all resources should be disallowed from running (can be useful during maintenance) |

| stop-orphan-resources | true | Whether resources that have been deleted from

the configuration should be stopped. This value

takes precedence over |

| stop-orphan-actions | true | Whether recurring operations that have been deleted from the configuration should be cancelled |

| start-failure-is-fatal | true | Whether a failure to start a resource on a

particular node prevents further start attempts

on that node? If |

| enable-startup-probes | true | Whether the cluster should check the pre-existing state of resources when the cluster starts |

| maintenance-mode | false | Whether the cluster should refrain from monitoring, starting and stopping resources |

| stonith-enabled | true | Whether the cluster is allowed to fence nodes (for example, failed nodes and nodes with resources that can’t be stopped. If true, at least one fence device must be configured before resources are allowed to run. If false, unresponsive nodes are immediately assumed to be running no resources, and resource recovery on online nodes starts without any further protection (which can mean data loss if the unresponsive node still accesses shared storage, for example). See also the requires resource meta-attribute. |

| stonith-action | reboot | Action the cluster should send to the fence agent

when a node must be fenced. Allowed values are

|

| stonith-timeout | 60s | How long to wait for |

| stonith-max-attempts | 10 | How many times fencing can fail for a target before the cluster will no longer immediately re-attempt it. |

| stonith-watchdog-timeout | 0 | If nonzero, and the cluster detects

If this is set to a positive value, unseen nodes are assumed to self-fence within this much time. Warning: It must be ensured that this value is

larger than the If this is set to a negative value, and

Warning: In this case, it is essential (and

currently not verified by pacemaker) that

|

| concurrent-fencing | false | Whether the cluster is allowed to initiate

multiple fence actions concurrently. Fence actions

initiated externally, such as via the

|

| fence-reaction | stop | How should a cluster node react if notified of its

own fencing? A cluster node may receive

notification of its own fencing if fencing is

misconfigured, or if fabric fencing is in use that

doesn’t cut cluster communication. Allowed values

are |

| priority-fencing-delay | 0 | Apply this delay to any fencing targeting the lost

nodes with the highest total resource priority in

case we don’t have the majority of the nodes in

our cluster partition, so that the more

significant nodes potentially win any fencing

match (especially meaningful in a split-brain of a

2-node cluster). A promoted resource instance

takes the resource’s priority plus 1 if the

resource’s priority is not 0. Any static or random

delays introduced by |

| cluster-delay | 60s | Estimated maximum round-trip delay over the network (excluding action execution). If the DC requires an action to be executed on another node, it will consider the action failed if it does not get a response from the other node in this time (after considering the action’s own timeout). The “correct” value will depend on the speed and load of your network and cluster nodes. |

| dc-deadtime | 20s | How long to wait for a response from other nodes during startup. The “correct” value will depend on the speed/load of your network and the type of switches used. |

| cluster-ipc-limit | 500 | The maximum IPC message backlog before one cluster daemon will disconnect another. This is of use in large clusters, for which a good value is the number of resources in the cluster multiplied by the number of nodes. The default of 500 is also the minimum. Raise this if you see “Evicting client” messages for cluster daemon PIDs in the logs. |

| pe-error-series-max | -1 | The number of scheduler inputs resulting in errors to save. Used when reporting problems. A value of -1 means unlimited (report all), and 0 means none. |

| pe-warn-series-max | 5000 | The number of scheduler inputs resulting in warnings to save. Used when reporting problems. A value of -1 means unlimited (report all), and 0 means none. |

| pe-input-series-max | 4000 | The number of “normal” scheduler inputs to save. Used when reporting problems. A value of -1 means unlimited (report all), and 0 means none. |

| enable-acl | false | Whether Access Control Lists (ACLs) should be used to authorize modifications to the CIB |

| placement-strategy | default | How the cluster should allocate resources to nodes

(see Utilization and Placement Strategy). Allowed values are

|

| node-health-strategy | none | How the cluster should react to node health

attributes (see Tracking Node Health). Allowed values

are |

| node-health-base | 0 | The base health score assigned to a node. Only

used when |

| node-health-green | 0 | The score to use for a node health attribute whose

value is |

| node-health-yellow | 0 | The score to use for a node health attribute whose

value is |

| node-health-red | 0 | The score to use for a node health attribute whose

value is |

| cluster-recheck-interval | 15min | Pacemaker is primarily event-driven, and looks

ahead to know when to recheck the cluster for

failure timeouts and most time-based rules

(since 2.0.3). However, it will also recheck the

cluster after this amount of inactivity. This has

two goals: rules with |

| shutdown-lock | false | The default of false allows active resources to be

recovered elsewhere when their node is cleanly

shut down, which is what the vast majority of

users will want. However, some users prefer to

make resources highly available only for failures,

with no recovery for clean shutdowns. If this

option is true, resources active on a node when it

is cleanly shut down are kept “locked” to that

node (not allowed to run elsewhere) until they

start again on that node after it rejoins (or for

at most |

| shutdown-lock-limit | 0 | If |

| remove-after-stop | false | Deprecated Should the cluster remove resources from Pacemaker’s executor after they are stopped? Values other than the default are, at best, poorly tested and potentially dangerous. This option is deprecated and will be removed in a future release. |

| startup-fencing | true | Advanced Use Only: Should the cluster fence unseen nodes at start-up? Setting this to false is unsafe, because the unseen nodes could be active and running resources but unreachable. |

| election-timeout | 2min | Advanced Use Only: If you need to adjust this value, it probably indicates the presence of a bug. |

| shutdown-escalation | 20min | Advanced Use Only: If you need to adjust this value, it probably indicates the presence of a bug. |

| join-integration-timeout | 3min | Advanced Use Only: If you need to adjust this value, it probably indicates the presence of a bug. |

| join-finalization-timeout | 30min | Advanced Use Only: If you need to adjust this value, it probably indicates the presence of a bug. |

| transition-delay | 0s | Advanced Use Only: Delay cluster recovery for the configured interval to allow for additional or related events to occur. This can be useful if your configuration is sensitive to the order in which ping updates arrive. Enabling this option will slow down cluster recovery under all conditions. |

3. Cluster Nodes¶

3.1. Defining a Cluster Node¶

Each cluster node will have an entry in the nodes section containing at

least an ID and a name. A cluster node’s ID is defined by the cluster layer

(Corosync).

Example Corosync cluster node entry

<node id="101" uname="pcmk-1"/>

In normal circumstances, the admin should let the cluster populate this information automatically from the cluster layer.

3.1.1. Where Pacemaker Gets the Node Name¶

The name that Pacemaker uses for a node in the configuration does not have to be the same as its local hostname. Pacemaker uses the following for a Corosync node’s name, in order of most preferred first:

- The value of

namein thenodelistsection ofcorosync.conf - The value of

ring0_addrin thenodelistsection ofcorosync.conf - The local hostname (value of

uname -n)

If the cluster is running, the crm_node -n command will display the local

node’s name as used by the cluster.

If a Corosync nodelist is used, crm_node --name-for-id with a Corosync

node ID will display the name used by the node with the given Corosync

nodeid, for example:

crm_node --name-for-id 2

3.2. Node Attributes¶

Pacemaker allows node-specific values to be specified using node attributes. A node attribute has a name, and may have a distinct value for each node.

Node attributes come in two types, permanent and transient. Permanent node

attributes are kept within the node entry, and keep their values even if

the cluster restarts on a node. Transient node attributes are kept in the CIB’s

status section, and go away when the cluster stops on the node.

While certain node attributes have specific meanings to the cluster, they are mainly intended to allow administrators and resource agents to track any information desired.

For example, an administrator might choose to define node attributes for how much RAM and disk space each node has, which OS each uses, or which server room rack each node is in.

Users can configure Rules that use node attributes to affect where resources are placed.

3.2.1. Setting and querying node attributes¶

Node attributes can be set and queried using the crm_attribute and

attrd_updater commands, so that the user does not have to deal with XML

configuration directly.

Here is an example command to set a permanent node attribute, and the XML configuration that would be generated:

Result of using crm_attribute to specify which kernel pcmk-1 is running

# crm_attribute --type nodes --node pcmk-1 --name kernel --update $(uname -r)

<node id="1" uname="pcmk-1">

<instance_attributes id="nodes-1-attributes">

<nvpair id="nodes-1-kernel" name="kernel" value="3.10.0-862.14.4.el7.x86_64"/>

</instance_attributes>

</node>

To read back the value that was just set:

# crm_attribute --type nodes --node pcmk-1 --name kernel --query

scope=nodes name=kernel value=3.10.0-862.14.4.el7.x86_64

The --type nodes indicates that this is a permanent node attribute;

--type status would indicate a transient node attribute.

3.2.2. Special node attributes¶

Certain node attributes have special meaning to the cluster.

Node attribute names beginning with # are considered reserved for these

special attributes. Some special attributes do not start with #, for

historical reasons.

Certain special attributes are set automatically by the cluster, should never be modified directly, and can be used only within Rules; these are listed under built-in node attributes.

For true/false values, the cluster considers a value of “1”, “y”, “yes”, “on”, or “true” (case-insensitively) to be true, “0”, “n”, “no”, “off”, “false”, or unset to be false, and anything else to be an error.

| Name | Description |

|---|---|

| fail-count-* | Attributes whose names start with

|

| last-failure-* | Attributes whose names start with

|

| maintenance | Similar to the Warning: Restarting pacemaker on a node that is

in single-node maintenance mode will likely

lead to undesirable effects. If

|

| probe_complete | This is managed by the cluster to detect when nodes need to be reprobed, and should never be used directly. |

| resource-discovery-enabled | If the node is a remote node, fencing is enabled,

and this attribute is explicitly set to false

(unset means true in this case), resource discovery

(probes) will not be done on this node. This is

highly discouraged; the |

| shutdown | This is managed by the cluster to orchestrate the shutdown of a node, and should never be used directly. |

| site-name | If set, this will be used as the value of the

|

| standby | If true, the node is in standby mode. This is

typically set and queried via the |

| terminate | If the value is true or begins with any nonzero number, the node will be fenced. This is typically set by tools rather than directly. |

| #digests-* | Attributes whose names start with |

| #node-unfenced | When the node was last unfenced (as seconds since the epoch). This is managed by the cluster and should never be used directly. |

3.3. Tracking Node Health¶

A node may be functioning adequately as far as cluster membership is concerned, and yet be “unhealthy” in some respect that makes it an undesirable location for resources. For example, a disk drive may be reporting SMART errors, or the CPU may be highly loaded.

Pacemaker offers a way to automatically move resources off unhealthy nodes.

3.3.1. Node Health Attributes¶

Pacemaker will treat any node attribute whose name starts with #health as

an indicator of node health. Node health attributes may have one of the

following values:

| Value | Intended significance |

|---|---|

red |

This indicator is unhealthy |

yellow |

This indicator is becoming unhealthy |

green |

This indicator is healthy |

| integer | A numeric score to apply to all resources on this node (0 or positive is healthy, negative is unhealthy) |

3.3.2. Node Health Strategy¶

Pacemaker assigns a node health score to each node, as the sum of the values of all its node health attributes. This score will be used as a location constraint applied to this node for all resources.

The node-health-strategy cluster option controls how Pacemaker responds to

changes in node health attributes, and how it translates red, yellow,

and green to scores.

Allowed values are:

| Value | Effect |

|---|---|

| none | Do not track node health attributes at all. |

| migrate-on-red | Assign the value of |

| only-green | Assign the value of |

| progressive | Assign the value of the |

| custom | Track node health attributes using the same values as

|

3.3.3. Exempting a Resource from Health Restrictions¶

If you want a resource to be able to run on a node even if its health score

would otherwise prevent it, set the resource’s allow-unhealthy-nodes

meta-attribute to true (available since 2.1.3).

This is particularly useful for node health agents, to allow them to detect when the node becomes healthy again. If you configure a health agent without this setting, then the health agent will be banned from an unhealthy node, and you will have to investigate and clear the health attribute manually once it is healthy to allow resources on the node again.

If you want the meta-attribute to apply to a clone, it must be set on the clone itself, not on the resource being cloned.

3.3.4. Configuring Node Health Agents¶

Since Pacemaker calculates node health based on node attributes, any method that sets node attributes may be used to measure node health. The most common are resource agents and custom daemons.

Pacemaker provides examples that can be used directly or as a basis for custom

code. The ocf:pacemaker:HealthCPU, ocf:pacemaker:HealthIOWait, and

ocf:pacemaker:HealthSMART resource agents set node health attributes based

on CPU and disk status.

To take advantage of this feature, add the resource to your cluster (generally as a cloned resource with a recurring monitor action, to continually check the health of all nodes). For example:

Example HealthIOWait resource configuration

<clone id="resHealthIOWait-clone">

<primitive class="ocf" id="HealthIOWait" provider="pacemaker" type="HealthIOWait">

<instance_attributes id="resHealthIOWait-instance_attributes">

<nvpair id="resHealthIOWait-instance_attributes-red_limit" name="red_limit" value="30"/>

<nvpair id="resHealthIOWait-instance_attributes-yellow_limit" name="yellow_limit" value="10"/>

</instance_attributes>

<operations>

<op id="resHealthIOWait-monitor-interval-5" interval="5" name="monitor" timeout="5"/>

<op id="resHealthIOWait-start-interval-0s" interval="0s" name="start" timeout="10s"/>

<op id="resHealthIOWait-stop-interval-0s" interval="0s" name="stop" timeout="10s"/>

</operations>

</primitive>

</clone>

The resource agents use attrd_updater to set proper status for each node

running this resource, as a node attribute whose name starts with #health

(for HealthIOWait, the node attribute is named #health-iowait).

When a node is no longer faulty, you can force the cluster to make it available to take resources without waiting for the next monitor, by setting the node health attribute to green. For example:

Force node1 to be marked as healthy

# attrd_updater --name "#health-iowait" --update "green" --node "node1"

4. Cluster Resources¶

4.1. What is a Cluster Resource?¶

A resource is a service managed by Pacemaker. The simplest type of resource, a primitive, is described in this chapter. More complex forms, such as groups and clones, are described in later chapters.

Every primitive has a resource agent that provides Pacemaker a standardized interface for managing the service. This allows Pacemaker to be agnostic about the services it manages. Pacemaker doesn’t need to understand how the service works because it relies on the resource agent to do the right thing when asked.

Every resource has a class specifying the standard that its resource agent follows, and a type identifying the specific service being managed.

4.2. Resource Classes¶

Pacemaker supports several classes, or standards, of resource agents:

- OCF

- LSB

- Systemd

- Upstart (deprecated)

- Service

- Fencing

- Nagios

4.2.1. Open Cluster Framework¶

The Open Cluster Framework (OCF) Resource Agent API is a ClusterLabs standard for managing services. It is the most preferred since it is specifically designed for use in a Pacemaker cluster.

OCF agents are scripts that support a variety of actions including start,

stop, and monitor. They may accept parameters, making them more

flexible than other classes. The number and purpose of parameters is left to

the agent, which advertises them via the meta-data action.

Unlike other classes, OCF agents have a provider as well as a class and type.

For more information, see the “Resource Agents” chapter of Pacemaker Administration and the OCF standard.

4.2.2. Systemd¶

Most Linux distributions use Systemd for system initialization and service management. Unit files specify how to manage services and are usually provided by the distribution.

Pacemaker can manage systemd services. Simply create a resource with

systemd as the resource class and the unit file name as the resource type.

Do not run systemctl enable on the unit.

Important

Make sure that any systemd services to be controlled by the cluster are not enabled to start at boot.

4.2.3. Linux Standard Base¶

LSB resource agents, also known as SysV-style, are scripts that provide start, stop, and status actions for a service.

They are provided by some operating system distributions. If a full path is not

given, they are assumed to be located in a directory specified when your

Pacemaker software was built (usually /etc/init.d).

In order to be used with Pacemaker, they must conform to the LSB specification as it relates to init scripts.

Warning

Some LSB scripts do not fully comply with the standard. For details on how to check whether your script is LSB-compatible, see the “Resource Agents” chapter of Pacemaker Administration. Common problems include:

- Not implementing the

statusaction - Not observing the correct exit status codes

- Starting a started resource returns an error

- Stopping a stopped resource returns an error

Important

Make sure the host is not configured to start any LSB services at boot that will be controlled by the cluster.

4.2.4. Upstart¶

Some Linux distributions previously used Upstart for system initialization and service management. Pacemaker is able to manage services using Upstart if the local system supports them and support was enabled when your Pacemaker software was built.

The jobs that specify how services are managed are usually provided by the operating system distribution.

Important

Make sure the host is not configured to start any Upstart services at boot that will be controlled by the cluster.

Warning

Upstart support is deprecated in Pacemaker. Upstart is no longer actively maintained, and test platforms for it are no longer readily usable. Support will be dropped entirely at the next major release of Pacemaker.

4.2.5. System Services¶

Since there are various types of system services (systemd,

upstart, and lsb), Pacemaker supports a special service alias which

intelligently figures out which one applies to a given cluster node.

This is particularly useful when the cluster contains a mix of

systemd, upstart, and lsb.

In order, Pacemaker will try to find the named service as:

- an LSB init script

- a Systemd unit file

- an Upstart job

4.2.7. Nagios Plugins¶

Nagios Plugins [1] are a way to monitor services. Pacemaker can use these as resources, to react to a change in the service’s status.

To use plugins as resources, Pacemaker must have been built with support, and OCF-style meta-data for the plugins must be installed on nodes that can run them. Meta-data for several common plugins is provided by the nagios-agents-metadata project.

The supported parameters for such a resource are same as the long options of the plugin.

Start and monitor actions for plugin resources are implemented as invoking the plugin. A plugin result of “OK” (0) is treated as success, a result of “WARN” (1) is treated as a successful but degraded service, and any other result is considered a failure.

A plugin resource is not going to change its status after recovery by

restarting the plugin, so using them alone does not make sense with on-fail

set (or left to default) to restart. Another value could make sense, for

example, if you want to fence or standby nodes that cannot reach some external

service.

A more common use case for plugin resources is to configure them with a

container meta-attribute set to the name of another resource that actually

makes the service available, such as a virtual machine or container.

With container set, the plugin resource will automatically be colocated

with the containing resource and ordered after it, and the containing resource

will be considered failed if the plugin resource fails. This allows monitoring

of a service inside a virtual machine or container, with recovery of the

virtual machine or container if the service fails.

Configuring a virtual machine as a guest node, or a container as a bundle, is the preferred way of monitoring a service inside, but plugin resources can be useful when it is not practical to modify the virtual machine or container image for this purpose.

4.3. Resource Properties¶

These values tell the cluster which resource agent to use for the resource, where to find that resource agent and what standards it conforms to.

| Field | Description |

|---|---|

| id | Your name for the resource |

| class | The standard the resource agent conforms to. Allowed values:

|

| description | A description of the Resource Agent, intended for local use.

E.g. |

| type | The name of the Resource Agent you wish to use. E.g.

|

| provider | The OCF spec allows multiple vendors to supply the same resource

agent. To use the OCF resource agents supplied by the Heartbeat

project, you would specify |

The XML definition of a resource can be queried with the crm_resource tool. For example:

# crm_resource --resource Email --query-xml

might produce:

A system resource definition

<primitive id="Email" class="service" type="exim"/>

Note

One of the main drawbacks to system services (LSB, systemd or Upstart) resources is that they do not allow any parameters!

An OCF resource definition

<primitive id="Public-IP" class="ocf" type="IPaddr" provider="heartbeat">

<instance_attributes id="Public-IP-params">

<nvpair id="Public-IP-ip" name="ip" value="192.0.2.2"/>

</instance_attributes>

</primitive>

4.4. Resource Options¶

Resources have two types of options: meta-attributes and instance attributes. Meta-attributes apply to any type of resource, while instance attributes are specific to each resource agent.

4.4.1. Resource Meta-Attributes¶

Meta-attributes are used by the cluster to decide how a resource should

behave and can be easily set using the --meta option of the

crm_resource command.

| Field | Default | Description |

|---|---|---|

| priority | 0 | If not all resources can be active, the cluster will stop lower priority resources in order to keep higher priority ones active. |

| critical | true | Use this value as the default for |

| target-role | Started | What state should the cluster attempt to keep this resource in? Allowed values:

|

| is-managed | TRUE | Is the cluster allowed to start and stop

the resource? Allowed values: |

| maintenance | FALSE | Similar to the |

| resource-stickiness | 1 for individual clone instances, 0 for all other resources | A score that will be added to the current node when a resource is already active. This allows running resources to stay where they are, even if they would be placed elsewhere if they were being started from a stopped state. |

| requires | quorum for resources

with a class of stonith,

otherwise unfencing if

unfencing is active in the

cluster, otherwise fencing

if stonith-enabled is true,

otherwise quorum |

Conditions under which the resource can be started. Allowed values:

|

| migration-threshold | INFINITY | How many failures may occur for this resource on

a node, before this node is marked ineligible to

host this resource. A value of 0 indicates that this

feature is disabled (the node will never be marked

ineligible); by constrast, the cluster treats

INFINITY (the default) as a very large but finite

number. This option has an effect only if the

failed operation specifies |

| failure-timeout | 0 | How many seconds to wait before acting as if the failure had not occurred, and potentially allowing the resource back to the node on which it failed. A value of 0 indicates that this feature is disabled. |

| multiple-active | stop_start | What should the cluster do if it ever finds the resource active on more than one node? Allowed values:

|

| allow-migrate | TRUE for ocf:pacemaker:remote resources, FALSE otherwise | Whether the cluster should try to “live migrate” this resource when it needs to be moved (see Migrating Resources) |

| allow-unhealthy-nodes | FALSE | Whether the resource should be able to run on a node even if the node’s health score would otherwise prevent it (see Tracking Node Health) (since 2.1.3) |

| container-attribute-target | Specific to bundle resources; see Bundle Node Attributes | |

| remote-node | The name of the Pacemaker Remote guest node this resource is associated with, if any. If specified, this both enables the resource as a guest node and defines the unique name used to identify the guest node. The guest must be configured to run the Pacemaker Remote daemon when it is started. WARNING: This value cannot overlap with any resource or node IDs. | |

| remote-port | 3121 | If remote-node is specified, the port on the

guest used for its Pacemaker Remote connection.

The Pacemaker Remote daemon on the guest must

be configured to listen on this port. |

| remote-addr | value of remote-node |

If remote-node is specified, the IP

address or hostname used to connect to the

guest via Pacemaker Remote. The Pacemaker Remote

daemon on the guest must be configured to accept

connections on this address. |

| remote-connect-timeout | 60s | If remote-node is specified, how long before

a pending guest connection will time out. |

As an example of setting resource options, if you performed the following commands on an LSB Email resource:

# crm_resource --meta --resource Email --set-parameter priority --parameter-value 100

# crm_resource -m -r Email -p multiple-active -v block

the resulting resource definition might be:

An LSB resource with cluster options

<primitive id="Email" class="lsb" type="exim">

<meta_attributes id="Email-meta_attributes">

<nvpair id="Email-meta_attributes-priority" name="priority" value="100"/>

<nvpair id="Email-meta_attributes-multiple-active" name="multiple-active" value="block"/>

</meta_attributes>

</primitive>

In addition to the cluster-defined meta-attributes described above, you may also configure arbitrary meta-attributes of your own choosing. Most commonly, this would be done for use in rules. For example, an IT department might define a custom meta-attribute to indicate which company department each resource is intended for. To reduce the chance of name collisions with cluster-defined meta-attributes added in the future, it is recommended to use a unique, organization-specific prefix for such attributes.

4.4.2. Setting Global Defaults for Resource Meta-Attributes¶

To set a default value for a resource option, add it to the

rsc_defaults section with crm_attribute. For example,

# crm_attribute --type rsc_defaults --name is-managed --update false

would prevent the cluster from starting or stopping any of the

resources in the configuration (unless of course the individual

resources were specifically enabled by having their is-managed set to

true).

4.4.3. Resource Instance Attributes¶

The resource agents of some resource classes (lsb, systemd and upstart not among them) can be given parameters which determine how they behave and which instance of a service they control.

If your resource agent supports parameters, you can add them with the

crm_resource command. For example,

# crm_resource --resource Public-IP --set-parameter ip --parameter-value 192.0.2.2

would create an entry in the resource like this:

An example OCF resource with instance attributes

<primitive id="Public-IP" class="ocf" type="IPaddr" provider="heartbeat">

<instance_attributes id="params-public-ip">

<nvpair id="public-ip-addr" name="ip" value="192.0.2.2"/>

</instance_attributes>

</primitive>

For an OCF resource, the result would be an environment variable

called OCF_RESKEY_ip with a value of 192.0.2.2.

The list of instance attributes supported by an OCF resource agent can be

found by calling the resource agent with the meta-data command.

The output contains an XML description of all the supported

attributes, their purpose and default values.

Displaying the metadata for the Dummy resource agent template

# export OCF_ROOT=/usr/lib/ocf

# $OCF_ROOT/resource.d/pacemaker/Dummy meta-data

<?xml version="1.0"?>

<!DOCTYPE resource-agent SYSTEM "ra-api-1.dtd">

<resource-agent name="Dummy" version="2.0">

<version>1.1</version>

<longdesc lang="en">

This is a dummy OCF resource agent. It does absolutely nothing except keep track

of whether it is running or not, and can be configured so that actions fail or

take a long time. Its purpose is primarily for testing, and to serve as a

template for resource agent writers.

</longdesc>

<shortdesc lang="en">Example stateless resource agent</shortdesc>

<parameters>

<parameter name="state" unique-group="state">

<longdesc lang="en">

Location to store the resource state in.

</longdesc>

<shortdesc lang="en">State file</shortdesc>

<content type="string" default="/var/run/Dummy-RESOURCE_ID.state" />

</parameter>

<parameter name="passwd" reloadable="1">

<longdesc lang="en">

Fake password field

</longdesc>

<shortdesc lang="en">Password</shortdesc>

<content type="string" default="" />

</parameter>

<parameter name="fake" reloadable="1">

<longdesc lang="en">

Fake attribute that can be changed to cause a reload

</longdesc>

<shortdesc lang="en">Fake attribute that can be changed to cause a reload</shortdesc>

<content type="string" default="dummy" />

</parameter>

<parameter name="op_sleep" reloadable="1">

<longdesc lang="en">

Number of seconds to sleep during operations. This can be used to test how

the cluster reacts to operation timeouts.

</longdesc>

<shortdesc lang="en">Operation sleep duration in seconds.</shortdesc>

<content type="string" default="0" />

</parameter>

<parameter name="fail_start_on" reloadable="1">

<longdesc lang="en">

Start, migrate_from, and reload-agent actions will return failure if running on

the host specified here, but the resource will run successfully anyway (future

monitor calls will find it running). This can be used to test on-fail=ignore.

</longdesc>

<shortdesc lang="en">Report bogus start failure on specified host</shortdesc>

<content type="string" default="" />

</parameter>

<parameter name="envfile" reloadable="1">

<longdesc lang="en">

If this is set, the environment will be dumped to this file for every call.

</longdesc>

<shortdesc lang="en">Environment dump file</shortdesc>

<content type="string" default="" />

</parameter>

</parameters>

<actions>

<action name="start" timeout="20s" />

<action name="stop" timeout="20s" />

<action name="monitor" timeout="20s" interval="10s" depth="0"/>

<action name="reload" timeout="20s" />

<action name="reload-agent" timeout="20s" />

<action name="migrate_to" timeout="20s" />

<action name="migrate_from" timeout="20s" />

<action name="validate-all" timeout="20s" />

<action name="meta-data" timeout="5s" />

</actions>

</resource-agent>

4.5. Resource Operations¶

Operations are actions the cluster can perform on a resource by calling the resource agent. Resource agents must support certain common operations such as start, stop, and monitor, and may implement any others.

Operations may be explicitly configured for two purposes: to override defaults for options (such as timeout) that the cluster will use whenever it initiates the operation, and to run an operation on a recurring basis (for example, to monitor the resource for failure).

An OCF resource with a non-default start timeout

<primitive id="Public-IP" class="ocf" type="IPaddr" provider="heartbeat">

<operations>

<op id="Public-IP-start" name="start" timeout="60s"/>

</operations>

<instance_attributes id="params-public-ip">

<nvpair id="public-ip-addr" name="ip" value="192.0.2.2"/>

</instance_attributes>

</primitive>

Pacemaker identifies operations by a combination of name and interval, so this combination must be unique for each resource. That is, you should not configure two operations for the same resource with the same name and interval.

4.5.1. Operation Properties¶

Operation properties may be specified directly in the op element as

XML attributes, or in a separate meta_attributes block as nvpair elements.

XML attributes take precedence over nvpair elements if both are specified.

| Field | Default | Description |

|---|---|---|

| id | A unique name for the operation. |

|

| name | The action to perform. This can be any action

supported by the agent; common values include

|

|

| interval | 0 | How frequently (in seconds) to perform the operation. A value of 0 means “when needed”. A positive value defines a recurring action, which is typically used with monitor. |

| timeout | How long to wait before declaring the action has failed |

|

| on-fail | Varies by action:

|

The action to take if this action ever fails. Allowed values:

|

| enabled | TRUE | If |

| record-pending | TRUE | If |

| role | Run the operation only on node(s) that the cluster

thinks should be in the specified role. This only

makes sense for recurring |

Note

When on-fail is set to demote, recovery from failure by a successful

demote causes the cluster to recalculate whether and where a new instance

should be promoted. The node with the failure is eligible, so if promotion

scores have not changed, it will be promoted again.

There is no direct equivalent of migration-threshold for the promoted

role, but the same effect can be achieved with a location constraint using a

rule with a node attribute expression for the resource’s fail

count.

For example, to immediately ban the promoted role from a node with any failed promote or promoted instance monitor:

<rsc_location id="loc1" rsc="my_primitive">

<rule id="rule1" score="-INFINITY" role="Promoted" boolean-op="or">

<expression id="expr1" attribute="fail-count-my_primitive#promote_0"

operation="gte" value="1"/>

<expression id="expr2" attribute="fail-count-my_primitive#monitor_10000"

operation="gte" value="1"/>

</rule>

</rsc_location>

This example assumes that there is a promotable clone of the my_primitive

resource (note that the primitive name, not the clone name, is used in the

rule), and that there is a recurring 10-second-interval monitor configured for

the promoted role (fail count attributes specify the interval in

milliseconds).

4.5.2. Monitoring Resources for Failure¶

When Pacemaker first starts a resource, it runs one-time monitor operations

(referred to as probes) to ensure the resource is running where it’s

supposed to be, and not running where it’s not supposed to be. (This behavior

can be affected by the resource-discovery location constraint property.)

Other than those initial probes, Pacemaker will not (by default) check that

the resource continues to stay healthy [2]. You must configure monitor

operations explicitly to perform these checks.

An OCF resource with a recurring health check

<primitive id="Public-IP" class="ocf" type="IPaddr" provider="heartbeat">

<operations>

<op id="Public-IP-start" name="start" timeout="60s"/>

<op id="Public-IP-monitor" name="monitor" interval="60s"/>

</operations>

<instance_attributes id="params-public-ip">

<nvpair id="public-ip-addr" name="ip" value="192.0.2.2"/>

</instance_attributes>

</primitive>

By default, a monitor operation will ensure that the resource is running

where it is supposed to. The target-role property can be used for further

checking.

For example, if a resource has one monitor operation with

interval=10 role=Started and a second monitor operation with

interval=11 role=Stopped, the cluster will run the first monitor on any nodes

it thinks should be running the resource, and the second monitor on any nodes

that it thinks should not be running the resource (for the truly paranoid,

who want to know when an administrator manually starts a service by mistake).

Note

Currently, monitors with role=Stopped are not implemented for

clone resources.

4.5.3. Monitoring Resources When Administration is Disabled¶

Recurring monitor operations behave differently under various administrative

settings:

When a resource is unmanaged (by setting

is-managed=false): No monitors will be stopped.If the unmanaged resource is stopped on a node where the cluster thinks it should be running, the cluster will detect and report that it is not, but it will not consider the monitor failed, and will not try to start the resource until it is managed again.

Starting the unmanaged resource on a different node is strongly discouraged and will at least cause the cluster to consider the resource failed, and may require the resource’s

target-roleto be set toStoppedthenStartedto be recovered.When a resource is put into maintenance mode (by setting

maintenance=true): The resource will be marked as unmanaged. (This overridesis-managed=true.)Additionally, all monitor operations will be stopped, except those specifying

roleasStopped(which will be newly initiated if appropriate). As with unmanaged resources in general, starting a resource on a node other than where the cluster expects it to be will cause problems.When a node is put into standby: All resources will be moved away from the node, and all

monitoroperations will be stopped on the node, except those specifyingroleasStopped(which will be newly initiated if appropriate).When a node is put into maintenance mode: All resources that are active on the node will be marked as in maintenance mode. See above for more details.

When the cluster is put into maintenance mode: All resources in the cluster will be marked as in maintenance mode. See above for more details.

A resource is in maintenance mode if the cluster, the node where the resource is active, or the resource itself is configured to be in maintenance mode. If a resource is in maintenance mode, then it is also unmanaged. However, if a resource is unmanaged, it is not necessarily in maintenance mode.

4.5.4. Setting Global Defaults for Operations¶

You can change the global default values for operation properties

in a given cluster. These are defined in an op_defaults section

of the CIB’s configuration section, and can be set with

crm_attribute. For example,

# crm_attribute --type op_defaults --name timeout --update 20s

would default each operation’s timeout to 20 seconds. If an

operation’s definition also includes a value for timeout, then that

value would be used for that operation instead.

4.5.5. When Implicit Operations Take a Long Time¶

The cluster will always perform a number of implicit operations: start,

stop and a non-recurring monitor operation used at startup to check

whether the resource is already active. If one of these is taking too long,

then you can create an entry for them and specify a longer timeout.

An OCF resource with custom timeouts for its implicit actions

<primitive id="Public-IP" class="ocf" type="IPaddr" provider="heartbeat">

<operations>

<op id="public-ip-startup" name="monitor" interval="0" timeout="90s"/>

<op id="public-ip-start" name="start" interval="0" timeout="180s"/>

<op id="public-ip-stop" name="stop" interval="0" timeout="15min"/>

</operations>

<instance_attributes id="params-public-ip">

<nvpair id="public-ip-addr" name="ip" value="192.0.2.2"/>

</instance_attributes>

</primitive>

4.5.6. Multiple Monitor Operations¶

Provided no two operations (for a single resource) have the same name

and interval, you can have as many monitor operations as you like.

In this way, you can do a superficial health check every minute and

progressively more intense ones at higher intervals.

To tell the resource agent what kind of check to perform, you need to

provide each monitor with a different value for a common parameter.

The OCF standard creates a special parameter called OCF_CHECK_LEVEL

for this purpose and dictates that it is “made available to the

resource agent without the normal OCF_RESKEY prefix”.

Whatever name you choose, you can specify it by adding an

instance_attributes block to the op tag. It is up to each

resource agent to look for the parameter and decide how to use it.

An OCF resource with two recurring health checks, performing

different levels of checks specified via OCF_CHECK_LEVEL.

<primitive id="Public-IP" class="ocf" type="IPaddr" provider="heartbeat">

<operations>

<op id="public-ip-health-60" name="monitor" interval="60">

<instance_attributes id="params-public-ip-depth-60">

<nvpair id="public-ip-depth-60" name="OCF_CHECK_LEVEL" value="10"/>

</instance_attributes>

</op>

<op id="public-ip-health-300" name="monitor" interval="300">

<instance_attributes id="params-public-ip-depth-300">

<nvpair id="public-ip-depth-300" name="OCF_CHECK_LEVEL" value="20"/>

</instance_attributes>

</op>

</operations>

<instance_attributes id="params-public-ip">

<nvpair id="public-ip-level" name="ip" value="192.0.2.2"/>

</instance_attributes>

</primitive>

4.5.7. Disabling a Monitor Operation¶

The easiest way to stop a recurring monitor is to just delete it.

However, there can be times when you only want to disable it

temporarily. In such cases, simply add enabled=false to the

operation’s definition.

Example of an OCF resource with a disabled health check

<primitive id="Public-IP" class="ocf" type="IPaddr" provider="heartbeat">

<operations>

<op id="public-ip-check" name="monitor" interval="60s" enabled="false"/>

</operations>

<instance_attributes id="params-public-ip">

<nvpair id="public-ip-addr" name="ip" value="192.0.2.2"/>

</instance_attributes>

</primitive>

This can be achieved from the command line by executing:

# cibadmin --modify --xml-text '<op id="public-ip-check" enabled="false"/>'

Once you’ve done whatever you needed to do, you can then re-enable it with

# cibadmin --modify --xml-text '<op id="public-ip-check" enabled="true"/>'

| [1] | The project has two independent forks, hosted at https://www.nagios-plugins.org/ and https://www.monitoring-plugins.org/. Output from both projects’ plugins is similar, so plugins from either project can be used with pacemaker. |

| [2] | Currently, anyway. Automatic monitoring operations may be added in a future version of Pacemaker. |

5. Resource Constraints¶

5.1. Scores¶

Scores of all kinds are integral to how the cluster works. Practically everything from moving a resource to deciding which resource to stop in a degraded cluster is achieved by manipulating scores in some way.

Scores are calculated per resource and node. Any node with a negative score for a resource can’t run that resource. The cluster places a resource on the node with the highest score for it.

5.1.1. Infinity Math¶

Pacemaker implements INFINITY (or equivalently, +INFINITY) internally as a score of 1,000,000. Addition and subtraction with it follow these three basic rules:

- Any value + INFINITY = INFINITY

- Any value - INFINITY = -INFINITY

- INFINITY - INFINITY = -INFINITY

Note

What if you want to use a score higher than 1,000,000? Typically this possibility arises when someone wants to base the score on some external metric that might go above 1,000,000.

The short answer is you can’t.

The long answer is it is sometimes possible work around this limitation creatively. You may be able to set the score to some computed value based on the external metric rather than use the metric directly. For nodes, you can store the metric as a node attribute, and query the attribute when computing the score (possibly as part of a custom resource agent).

5.2. Deciding Which Nodes a Resource Can Run On¶

Location constraints tell the cluster which nodes a resource can run on.

There are two alternative strategies. One way is to say that, by default, resources can run anywhere, and then the location constraints specify nodes that are not allowed (an opt-out cluster). The other way is to start with nothing able to run anywhere, and use location constraints to selectively enable allowed nodes (an opt-in cluster).

Whether you should choose opt-in or opt-out depends on your personal preference and the make-up of your cluster. If most of your resources can run on most of the nodes, then an opt-out arrangement is likely to result in a simpler configuration. On the other-hand, if most resources can only run on a small subset of nodes, an opt-in configuration might be simpler.

5.2.1. Location Properties¶

| Attribute | Default | Description |

|---|---|---|

| id | A unique name for the constraint (required) |

|

| rsc | The name of the resource to which this constraint

applies. A location constraint must either have a

|

|

| rsc-pattern | A pattern matching the names of resources to which

this constraint applies. The syntax is the same as

POSIX

extended regular expressions, with the addition of an

initial |

|

| node | The name of the node to which this constraint applies.

A location constraint must either have a |

|

| score | Positive values indicate a preference for running the

affected resource(s) on |

|

| resource-discovery | always | Whether Pacemaker should perform resource discovery (that is, check whether the resource is already running) for this resource on this node. This should normally be left as the default, so that rogue instances of a service can be stopped when they are running where they are not supposed to be. However, there are two situations where disabling resource discovery is a good idea: when a service is not installed on a node, discovery might return an error (properly written OCF agents will not, so this is usually only seen with other agent types); and when Pacemaker Remote is used to scale a cluster to hundreds of nodes, limiting resource discovery to allowed nodes can significantly boost performance.

|

Warning

Setting resource-discovery to never or exclusive removes Pacemaker’s

ability to detect and stop unwanted instances of a service running

where it’s not supposed to be. It is up to the system administrator (you!)

to make sure that the service can never be active on nodes without

resource-discovery (such as by leaving the relevant software uninstalled).

5.2.2. Asymmetrical “Opt-In” Clusters¶

To create an opt-in cluster, start by preventing resources from running anywhere by default:

# crm_attribute --name symmetric-cluster --update false

Then start enabling nodes. The following fragment says that the web server prefers sles-1, the database prefers sles-2 and both can fail over to sles-3 if their most preferred node fails.

Opt-in location constraints for two resources

<constraints>

<rsc_location id="loc-1" rsc="Webserver" node="sles-1" score="200"/>

<rsc_location id="loc-2" rsc="Webserver" node="sles-3" score="0"/>

<rsc_location id="loc-3" rsc="Database" node="sles-2" score="200"/>

<rsc_location id="loc-4" rsc="Database" node="sles-3" score="0"/>

</constraints>

5.2.3. Symmetrical “Opt-Out” Clusters¶

To create an opt-out cluster, start by allowing resources to run anywhere by default:

# crm_attribute --name symmetric-cluster --update true

Then start disabling nodes. The following fragment is the equivalent of the above opt-in configuration.

Opt-out location constraints for two resources

<constraints>

<rsc_location id="loc-1" rsc="Webserver" node="sles-1" score="200"/>

<rsc_location id="loc-2-do-not-run" rsc="Webserver" node="sles-2" score="-INFINITY"/>

<rsc_location id="loc-3-do-not-run" rsc="Database" node="sles-1" score="-INFINITY"/>

<rsc_location id="loc-4" rsc="Database" node="sles-2" score="200"/>

</constraints>

5.2.4. What if Two Nodes Have the Same Score¶

If two nodes have the same score, then the cluster will choose one. This choice may seem random and may not be what was intended, however the cluster was not given enough information to know any better.

Constraints where a resource prefers two nodes equally

<constraints>

<rsc_location id="loc-1" rsc="Webserver" node="sles-1" score="INFINITY"/>

<rsc_location id="loc-2" rsc="Webserver" node="sles-2" score="INFINITY"/>

<rsc_location id="loc-3" rsc="Database" node="sles-1" score="500"/>

<rsc_location id="loc-4" rsc="Database" node="sles-2" score="300"/>

<rsc_location id="loc-5" rsc="Database" node="sles-2" score="200"/>

</constraints>

In the example above, assuming no other constraints and an inactive cluster, Webserver would probably be placed on sles-1 and Database on sles-2. It would likely have placed Webserver based on the node’s uname and Database based on the desire to spread the resource load evenly across the cluster. However other factors can also be involved in more complex configurations.

5.2.5. Specifying locations using pattern matching¶

A location constraint can affect all resources whose IDs match a given pattern. The following example bans resources named ip-httpd, ip-asterisk, ip-gateway, etc., from node1.

Location constraint banning all resources matching a pattern from one node

<constraints>

<rsc_location id="ban-ips-from-node1" rsc-pattern="ip-.*" node="node1" score="-INFINITY"/>

</constraints>

5.3. Specifying the Order in which Resources Should Start/Stop¶

Ordering constraints tell the cluster the order in which certain resource actions should occur.

Important

Ordering constraints affect only the ordering of resource actions; they do not require that the resources be placed on the same node. If you want resources to be started on the same node and in a specific order, you need both an ordering constraint and a colocation constraint (see Placing Resources Relative to other Resources), or alternatively, a group (see Groups - A Syntactic Shortcut).

5.3.1. Ordering Properties¶

| Field | Default | Description |

|---|---|---|

| id | A unique name for the constraint |

|

| first | Name of the resource that the |

|

| then | Name of the dependent resource |

|

| first-action | start | The action that the |

| then-action | value of first-action |

The action that the |

| kind | Mandatory | How to enforce the constraint. Allowed values:

|

| symmetrical | TRUE for Mandatory and

Optional kinds. FALSE

for Serialize kind. |

If true, the reverse of the constraint applies for the

opposite action (for example, if B starts after A starts,

then B stops before A stops). |

Promote and demote apply to promotable

clone resources.

5.3.2. Optional and mandatory ordering¶

Here is an example of ordering constraints where Database must start before Webserver, and IP should start before Webserver if they both need to be started:

Optional and mandatory ordering constraints

<constraints>

<rsc_order id="order-1" first="IP" then="Webserver" kind="Optional"/>

<rsc_order id="order-2" first="Database" then="Webserver" kind="Mandatory" />

</constraints>

Because the above example lets symmetrical default to TRUE, Webserver

must be stopped before Database can be stopped, and Webserver should be

stopped before IP if they both need to be stopped.

5.4. Placing Resources Relative to other Resources¶

Colocation constraints tell the cluster that the location of one resource depends on the location of another one.

Colocation has an important side-effect: it affects the order in which resources are assigned to a node. Think about it: You can’t place A relative to B unless you know where B is [1].

So when you are creating colocation constraints, it is important to consider whether you should colocate A with B, or B with A.

Important

Colocation constraints affect only the placement of resources; they do not require that the resources be started in a particular order. If you want resources to be started on the same node and in a specific order, you need both an ordering constraint (see Specifying the Order in which Resources Should Start/Stop) and a colocation constraint, or alternatively, a group (see Groups - A Syntactic Shortcut).

5.4.1. Colocation Properties¶

| Field | Default | Description |

|---|---|---|

| id | A unique name for the constraint (required). |

|

| rsc | The name of a resource that should be located

relative to |

|

| with-rsc | The name of the resource used as the colocation

target. The cluster will decide where to put this

resource first and then decide where to put |

|

| node-attribute | #uname | If |

| score | 0 | Positive values indicate the resources should run on

the same node. Negative values indicate the resources

should run on different nodes. Values of

+/- |

| rsc-role | Started | If |

| with-rsc-role | Started | If |

| influence | value of

critical

meta-attribute

for rsc |

Whether to consider the location preferences of

|

5.4.2. Mandatory Placement¶

Mandatory placement occurs when the constraint’s score is

+INFINITY or -INFINITY. In such cases, if the constraint can’t be

satisfied, then the rsc resource is not permitted to run. For

score=INFINITY, this includes cases where the with-rsc resource is

not active.

If you need resource A to always run on the same machine as resource B, you would add the following constraint:

Mandatory colocation constraint for two resources

<rsc_colocation id="colocate" rsc="A" with-rsc="B" score="INFINITY"/>

Remember, because INFINITY was used, if B can’t run on any of the cluster nodes (for whatever reason) then A will not be allowed to run. Whether A is running or not has no effect on B.

Alternatively, you may want the opposite – that A cannot

run on the same machine as B. In this case, use score="-INFINITY".

Mandatory anti-colocation constraint for two resources

<rsc_colocation id="anti-colocate" rsc="A" with-rsc="B" score="-INFINITY"/>

Again, by specifying -INFINITY, the constraint is binding. So if the only place left to run is where B already is, then A may not run anywhere.

As with INFINITY, B can run even if A is stopped. However, in this case A also can run if B is stopped, because it still meets the constraint of A and B not running on the same node.

5.4.3. Advisory Placement¶

If mandatory placement is about “must” and “must not”, then advisory placement is the “I’d prefer if” alternative.

For colocation constraints with scores greater than -INFINITY and less than INFINITY, the cluster will try to accommodate your wishes, but may ignore them if other factors outweigh the colocation score. Those factors might include other constraints, resource stickiness, failure thresholds, whether other resources would be prevented from being active, etc.

Advisory colocation constraint for two resources

<rsc_colocation id="colocate-maybe" rsc="A" with-rsc="B" score="500"/>

5.4.4. Colocation by Node Attribute¶

The node-attribute property of a colocation constraints allows you to express

the requirement, “these resources must be on similar nodes”.

As an example, imagine that you have two Storage Area Networks (SANs) that are

not controlled by the cluster, and each node is connected to one or the other.

You may have two resources r1 and r2 such that r2 needs to use the same

SAN as r1, but doesn’t necessarily have to be on the same exact node.

In such a case, you could define a node attribute named

san, with the value san1 or san2 on each node as appropriate. Then, you

could colocate r2 with r1 using node-attribute set to san.

5.4.5. Colocation Influence¶

By default, if A is colocated with B, the cluster will take into account A’s preferences when deciding where to place B, to maximize the chance that both resources can run.

For a detailed look at exactly how this occurs, see Colocation Explained.

However, if influence is set to false in the colocation constraint,

this will happen only if B is inactive and needing to be started. If B is

already active, A’s preferences will have no effect on placing B.

An example of what effect this would have and when it would be desirable would

be a nonessential reporting tool colocated with a resource-intensive service

that takes a long time to start. If the reporting tool fails enough times to

reach its migration threshold, by default the cluster will want to move both

resources to another node if possible. Setting influence to false on

the colocation constraint would mean that the reporting tool would be stopped

in this situation instead, to avoid forcing the service to move.

The critical resource meta-attribute is a convenient way to specify the

default for all colocation constraints and groups involving a particular

resource.

Note

If a noncritical resource is a member of a group, all later members of the group will be treated as noncritical, even if they are marked as (or left to default to) critical.

5.5. Resource Sets¶

Resource sets allow multiple resources to be affected by a single constraint.

A set of 3 resources

<resource_set id="resource-set-example">

<resource_ref id="A"/>

<resource_ref id="B"/>

<resource_ref id="C"/>

</resource_set>

Resource sets are valid inside rsc_location, rsc_order

(see Ordering Sets of Resources), rsc_colocation

(see Colocating Sets of Resources), and rsc_ticket

(see Configuring Ticket Dependencies) constraints.

A resource set has a number of properties that can be set, though not all have an effect in all contexts.

| Field | Default | Description |

|---|---|---|

| id | A unique name for the set (required) |

|

| sequential | true | Whether the members of the set must be acted on in

order. Meaningful within |

| require-all | true | Whether all members of the set must be active before

continuing. With the current implementation, the

cluster may continue even if only one member of the

set is started, but if more than one member of the set

is starting at the same time, the cluster will still

wait until all of those have started before continuing

(this may change in future versions). Meaningful

within |

| role | The constraint applies only to resource set members

that are Promotable clones in this

role. Meaningful within |

|

| action | value of

first-action

in the enclosing

ordering

constraint |

The action that applies to all members of the set.

Meaningful within |

| score | Advanced use only. Use a specific score for this set within the constraint. |

5.6. Ordering Sets of Resources¶

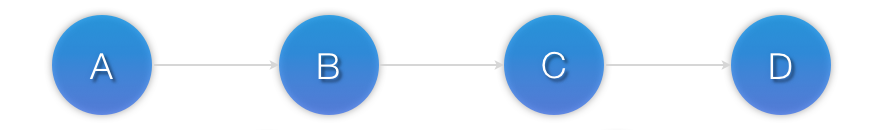

A common situation is for an administrator to create a chain of ordered resources, such as:

A chain of ordered resources

<constraints>

<rsc_order id="order-1" first="A" then="B" />

<rsc_order id="order-2" first="B" then="C" />

<rsc_order id="order-3" first="C" then="D" />

</constraints>

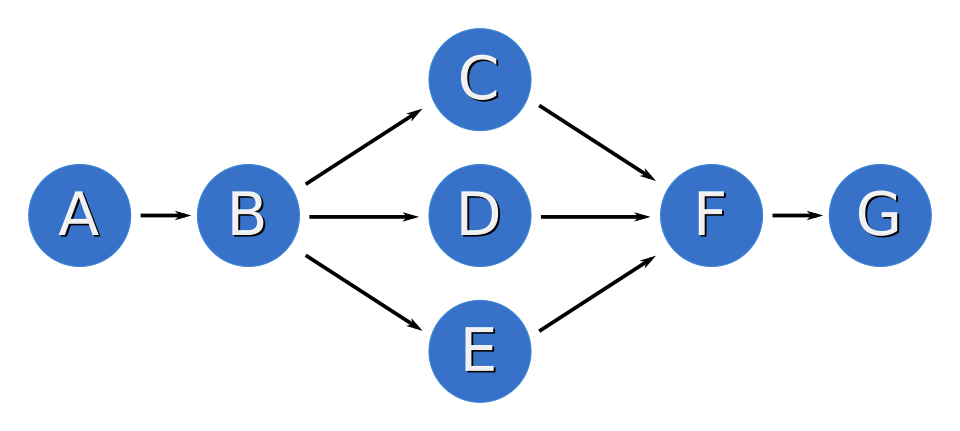

Visual representation of the four resources’ start order for the above constraints

5.6.1. Ordered Set¶

To simplify this situation, Resource Sets can be used within ordering constraints:

A chain of ordered resources expressed as a set

<constraints>

<rsc_order id="order-1">

<resource_set id="ordered-set-example" sequential="true">

<resource_ref id="A"/>